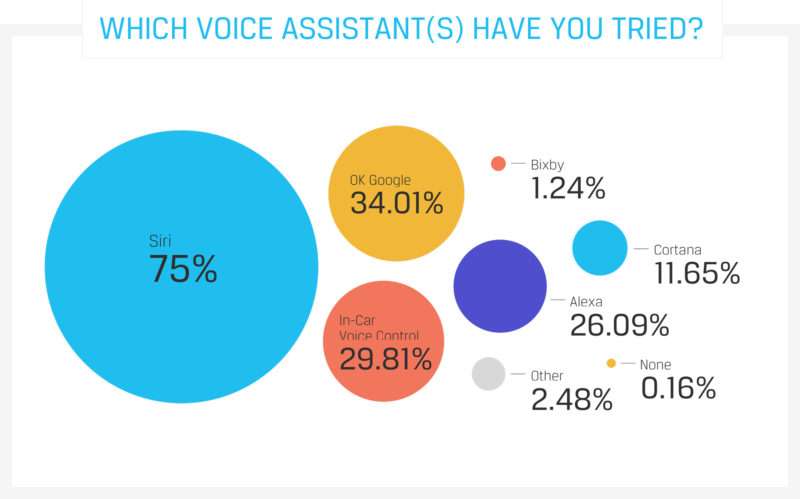

Voice technology like Siri, Amazon Echo, and Google Home has changed the way we interact with our smartphones and smart speakers.

Technology is all around us, and all you need now is your voice to interact with it.

In this article, we’ll review the changing landscape of voice app development and how you can tap into voice technology for your own mobile application.

Table of Contents

- What is a Voice Mobile App

- Automatic Speech Recognition (ASR)

- Natural Language Understanding (NLU)

- Voice User Interface (VUI)

- Types of Voice

- How it Works

- The Importance of Voice Technology

- Designing VUI

- Users’ Expectations

- Guideline #1: Provide Users with the Right Information

- Guideline #2: Let Users Know Where They Are

- Guideline #3: Utilize Visual Feedback

- Guideline #4: Give Examples

- Voice Limitations

- User Experience

- Loud Surroundings

- Linguistic Challenges

Voice apps use voice and speech recognition to allow users to interact with their devices and accomplish tasks.

They enable users to perform a wide range of functions, like setting alarms, checking the weather, playing music, and more, by simply speaking a command or request.

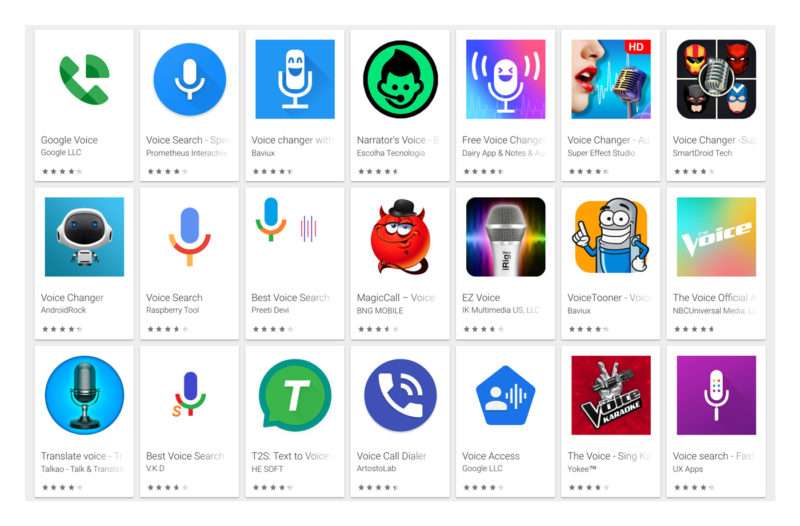

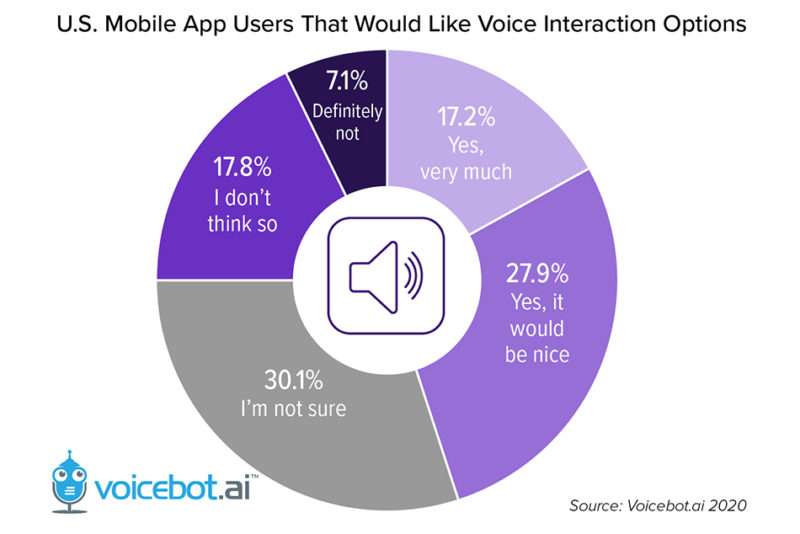

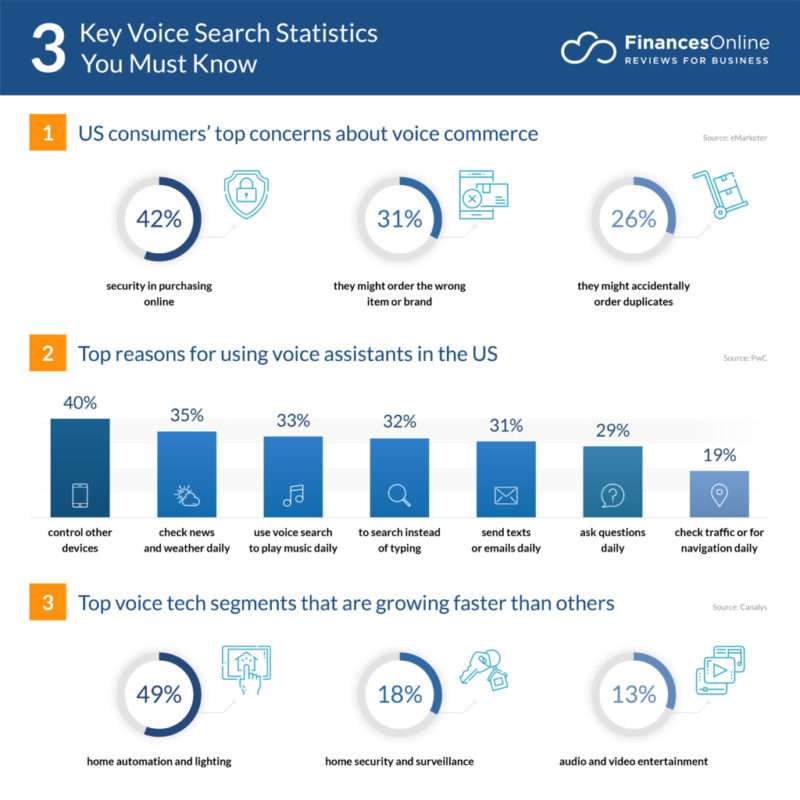

The popularity of voice apps is on the rise, as people increasingly seek more convenient and intuitive ways to interact with technology.

This trend is fueled by the proliferation of smart devices, which can be controlled using voice-enabled technology.

As a result, developers are focusing on creating user-friendly voice apps that are tailored to the specific needs of their users, with the goal of making voice interaction the primary way in which we interact with technology.

Photo Credit: punchcut.com

1.1 Automatic Speech Recognition (ASR)

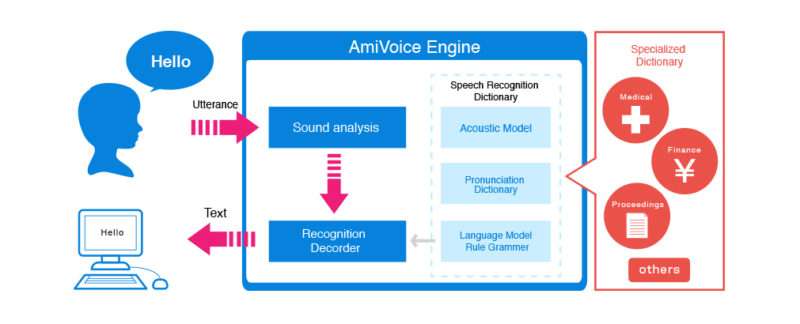

Automatic Speech Recognition (ASR) is a technology that enables a computer to transcribe human speech into text.

It uses complex algorithms and statistical models to analyze audio recordings and convert spoken words into written text.

ASR is used in a wide range of applications, like virtual assistants, dictation software, and language translation services.

It can be used to transcribe live speech in real-time or to transcribe pre-recorded audio files.

ASR tech still faces some challenges, like accurately recognizing different accents, understanding contextual nuances, and dealing with background noise.

As a result, continuous advancements in ASR are being made to improve its accuracy and efficiency.

1.2 Natural Language Understanding (NLU)

Like ASR, Natural Language Understanding (NLU) is a type of voice software. Unlike ASR, however, NLU tries to understand the meaning of the words, not just transcribe them.

It takes key phrases and attempts to make sense of the context surrounding them. Search powered by NLU aims to return exactly what you want.

One example is Google Search’s voice-based feature, which tries to make sense of the words you speak in order to return more accurate results.
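As a rough illustration of picking out key phrases, here is a minimal Python sketch. It is not how Google or any production NLU system works; those rely on trained language models. The intent names, trigger phrases, and the `guess_intent` function are all hypothetical, chosen only to show the idea of mapping spoken words to a likely meaning.

```python
import re

# Hypothetical intents and trigger phrases, chosen only for illustration.
INTENT_PHRASES = {
    "get_weather": ["weather", "forecast", "rain"],
    "play_music": ["play", "music", "song"],
    "set_alarm": ["alarm", "wake me"],
}


def guess_intent(transcript: str) -> str:
    """Return the intent whose trigger phrases match the transcript most often."""
    text = transcript.lower()
    words = set(re.findall(r"[a-z']+", text))
    best_intent, best_score = "unknown", 0
    for intent, phrases in INTENT_PHRASES.items():
        # Multi-word phrases are matched as substrings, single words as whole words.
        score = sum(
            1 for p in phrases if ((p in text) if " " in p else (p in words))
        )
        if score > best_score:
            best_intent, best_score = intent, score
    return best_intent
```

Calling `guess_intent("What's the weather like today?")` picks the weather intent because "weather" is one of its trigger words. A request with no known phrases falls back to `"unknown"`, which is where a real app would ask the user to rephrase.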

Photo Credit: digitaltrends.com

PRO TIP:

network, will improve and learn over time.

1.3 Voice User Interface (VUI)

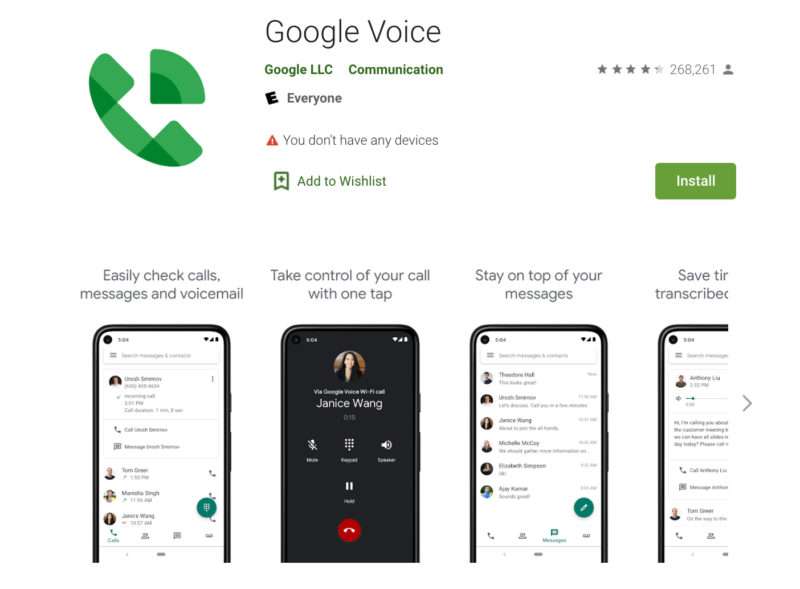

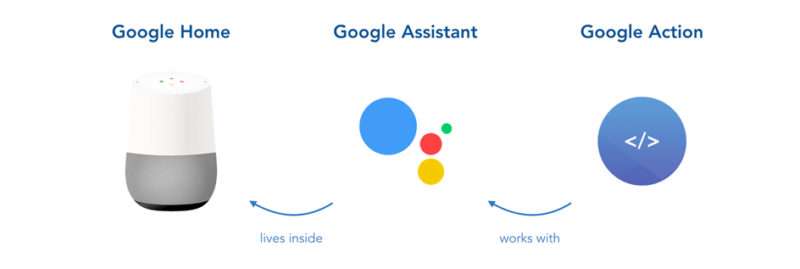

VUI stands for voice user interface. It belongs to a class of UI that’s considered so natural that users don’t even notice it.

VUI allows users to interact with a specific system or software through speech commands. Prime examples of VUI include Alexa, Siri, and Google Assistant.

These are incredibly popular because they offer a hands-free and eyes-free way to interact with one thing while doing something else.

Photo Credit: ptpinc.com

This is a completely different user interface than what people are accustomed to when using regular mobile apps, which often stick with a standardized set of guidelines that feels natural and intuitive for users.

When designing VUI actions, it’s crucial that the possible actions are understood by users in a way they can remember, without bombarding them with too much information.

We’ll get into VUI more in chapter 3.

1.4 Types of Voice

While you may see the terms used interchangeably, there’s actually a difference between voice recognition and speech recognition.

Voice recognition often refers to recognizing a specific person’s speech, which is typically for security purposes.

Speech recognition has to do with recognizing patterns in speech and words and extracting meaning from them.

Voice software refers to the overall field where spoken sounds and speech have the ability to control devices, such as apps.

1.5 How it Works

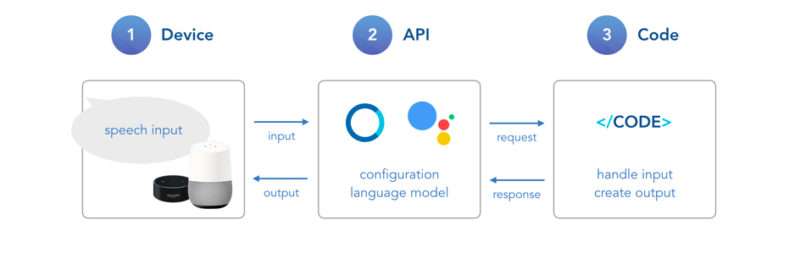

The software takes a given sound and processes it in order to take a specific action. Neural networks break these sounds into smaller pieces to help interpret their meaning.

To activate Amazon’s Alexa, for example, you would speak its “wake phrase,” which is “Alexa.”

Photo Credit: mantralabsglobal.com

This switches on the device’s voice audio and sends the snippets to a deep neural network, which determines if you said the correct wake phrase or not.
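The gating idea behind a wake phrase can be sketched in a few lines of Python. This is a simplification under a loud assumption: real devices detect the wake phrase in the audio itself using a neural network, while this sketch works on text that has already been transcribed. The `extract_command` function is hypothetical and only shows that nothing is passed along until the wake phrase is heard.

```python
import re
from typing import Optional

WAKE_PHRASE = "alexa"  # the wake phrase from the example above


def extract_command(transcript: str, wake_phrase: str = WAKE_PHRASE) -> Optional[str]:
    """Return the words spoken after the wake phrase, or None if it was not heard."""
    # Lowercase and drop punctuation so "Alexa," and "alexa" compare equal.
    words = re.findall(r"[a-z0-9']+", transcript.lower())
    if wake_phrase not in words:
        return None  # stay idle: nothing is sent on for processing
    start = words.index(wake_phrase) + 1
    return " ".join(words[start:])
```

With this sketch, a transcript without the wake phrase returns `None`, so the rest of the app never sees it. Only the words after the wake phrase are handed on as the command.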

Voice-based software often requires an internet connection and uses the cloud to transcribe and interpret speech.

Leveraging voice technology means never having to touch or interact with a screen.

And while it doesn’t always make sense to replace touch experiences with voice, there are indeed many instances where voice technology is incredibly beneficial.

A primary example is when people aren’t able to easily access their device. They may be jogging, cooking, or they simply may have their hands full and can’t interact with a device.

All they would need to do is speak a word or wake phrase to get the news, weather, music, and more.

We already make use of our other senses like touch, sight, and hearing, to interact with the devices around us, so voice is a logical next step (who knows what our sense of taste will have in store for us in the future!).

Voice has become a popular interface because it extends what was possible with a conventional UI.

It can also simplify our digital world. When conducting a basic text search, you’ll find that there are pages and pages of results. But with voice UI, the software has to curate your options.

If you ask Siri or Alexa how to make a peanut butter and jelly sandwich through a voice search, the voice UI isn’t going to list off a million results on the topic. It’s going to carefully choose what it thinks is the most relevant answer and deliver that to you.

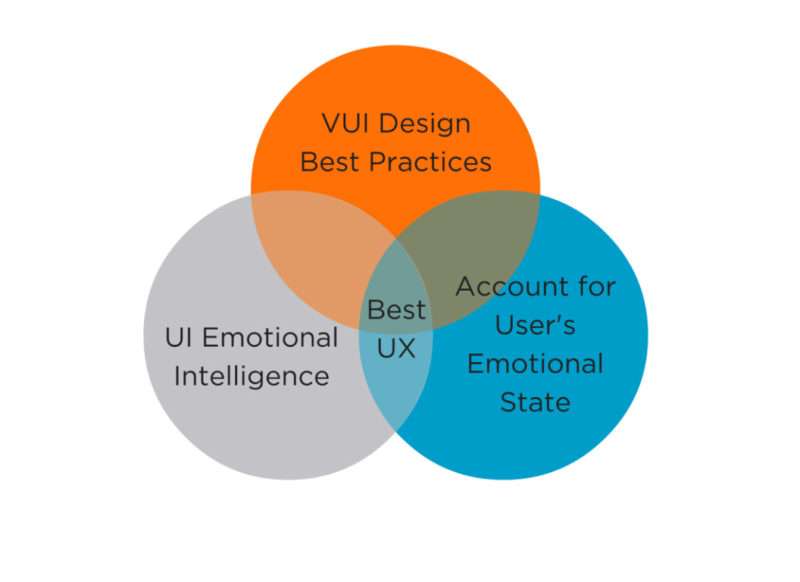

Voice user interface has opened up an entirely new user experience that comes with its own set of best practices.

When voice app developers create a voice app, they can’t apply the same design principles as they would with a typical mobile app’s graphical user interface.

Photo Credit: punchcut.com

It’s simply impossible. With VUI, there aren’t any visual components like there are with mobile apps, which have navigation menus, images, buttons, and so on.

Not only do VUI apps need to have a superior understanding of the spoken language and the ability to interpret speech meaning and context in a way that provides users with value, but they also need to teach users how to interact with the voice app and what commands they can use.

3.1 Users’ Expectations

While VUI is relatively new technology, users have high, and often unrealistic, expectations for how they can communicate with it.

Just a quick look at some reviews of the Amazon Echo will show how many users form bonds with their speaker, as if it’s a pet rather than a piece of software.

In short, voice applications can’t quite live up to users’ expectations of having a normal and natural conversation, which is what makes VUI design so important.

A voice interface needs to give users the right amount of information.

Let’s highlight some key guidelines you should follow when designing a voice app.

3.2 Guideline #1: Provide Users with the Right Information

With a mobile app, you can display various options to users through the graphical user interface. But this isn’t possible with a voice interface.

The first thing to remember is that you never want to bombard users with all the information about your app. You need to decide what information is most important to get them using the voice software.

Provide users with key options for voice interaction. For example, a weather app might say something like: “You can ask for the weather forecast today or you can ask for a weekly weather forecast.”
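One way to keep a spoken prompt short is to cap how many options it mentions. The Python sketch below is only an illustration: the `build_welcome_prompt` function and its `max_options` parameter are hypothetical and not part of any voice platform's API.

```python
from typing import Sequence


def build_welcome_prompt(options: Sequence[str], max_options: int = 2) -> str:
    """Mention only the first few options so the spoken prompt stays short."""
    spoken = list(options[:max_options])
    if not spoken:
        return "What would you like to do?"
    if len(spoken) == 1:
        return f"You can ask for {spoken[0]}."
    return "You can ask for " + " or you can ask for ".join(spoken) + "."
```

Given a list of three weather features, the default cap of two reproduces the prompt quoted above and leaves the third option for a later turn in the conversation.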

3.3 Guideline #2: Let Users Know Where They Are

You probably have noticed that when you ask a voice interface a question like: “What’s the weather like today?”, it won’t answer “80% chance of rain.”

It’ll answer: “Today’s weather forecast shows an 80% chance of rain.”

As you can see, the interface reiterates the question so a user understands the exact functionality they’re using.

PRO TIP:

There’s no visual guidance when it comes to voice interaction, so getting lost can happen easily. It’s crucial to always inform users what functionality they’re using and how to exit it.
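A response template can handle both habits at once: restating what was asked and reminding the user how to leave. This is a minimal sketch. The `build_response` function and the default exit word "stop" are assumptions made for illustration, not something a particular assistant prescribes.

```python
def build_response(topic: str, answer: str, exit_word: str = "stop") -> str:
    """Restate what was asked before answering, then say how to exit."""
    return f"{topic} shows {answer}. Say '{exit_word}' to exit."
```

For example, `build_response("Today's weather forecast", "an 80% chance of rain")` opens with the restated question before giving the answer. In practice you would probably add the exit reminder only on the first response or after a pause, so it doesn't become repetitive.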

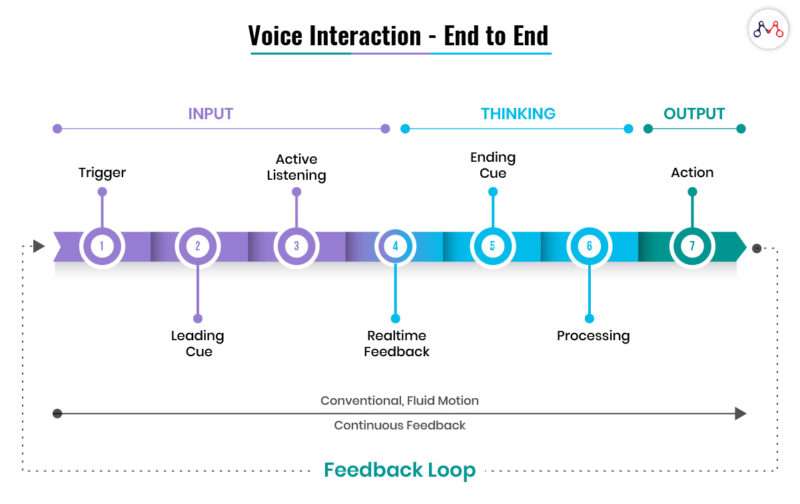

3.4 Guideline #3: Utilize Visual Feedback

Let users know when your voice software is listening.

There’s nothing more frustrating than speaking when no one is listening, so there should always be some kind of cue or visual feedback that lets users know that the software is listening.

You can see this with the Amazon Echo which has a blue light that lights up on the device when the wake phrase “Alexa” is spoken.

Some voice assistants and voice software will have a noise, like a ding, that lets users know when the software is listening.

3.5 Guideline #4: Give Examples

As already stated, voice interfaces can be tricky to navigate as a user because there is no graphical user interface.

Users must express their intentions clearly with devices like Amazon Echo or software like a voice chat app. They can’t take shortcuts or hint at what they want.

This is why it’s important to offer users examples of how to phrase a question so they get the most valuable information back.

If you have an Amazon Echo, you’ll notice that the voice interface will sometimes say “You can ask me…” and then tell you how to phrase a question to get the most out of it.

While voice software and voice interfaces have come a long way over the years, incorporating new technologies like machine learning and AI to improve, they still have their limitations.

4.1 User Experience

Initiating voice through a touch interface can be a pain. So can this phrase: “Sorry, I don’t understand that.”

Voice apps and interfaces need to be able to support a wide variety of commands, and while the apps are improving, they just don’t work all the time which can result in a loss of trust by users.
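One way to soften that dead end is to pair the apology with a couple of example commands, in the spirit of Guideline #4. The Python sketch below is a hedged illustration. The `build_fallback` function and the example commands are hypothetical.

```python
from typing import Sequence


def build_fallback(examples: Sequence[str], max_examples: int = 2) -> str:
    """Apologize, then suggest a few commands the app does understand."""
    shown = [f'"{e}"' for e in examples[:max_examples]]
    if not shown:
        return "Sorry, I don't understand that."
    return "Sorry, I don't understand that. You can ask me " + " or ".join(shown) + "."
```

Capping the number of examples keeps the reprompt short, which matters more in speech than on a screen because the user can't skim ahead.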

4.2 Loud Surroundings

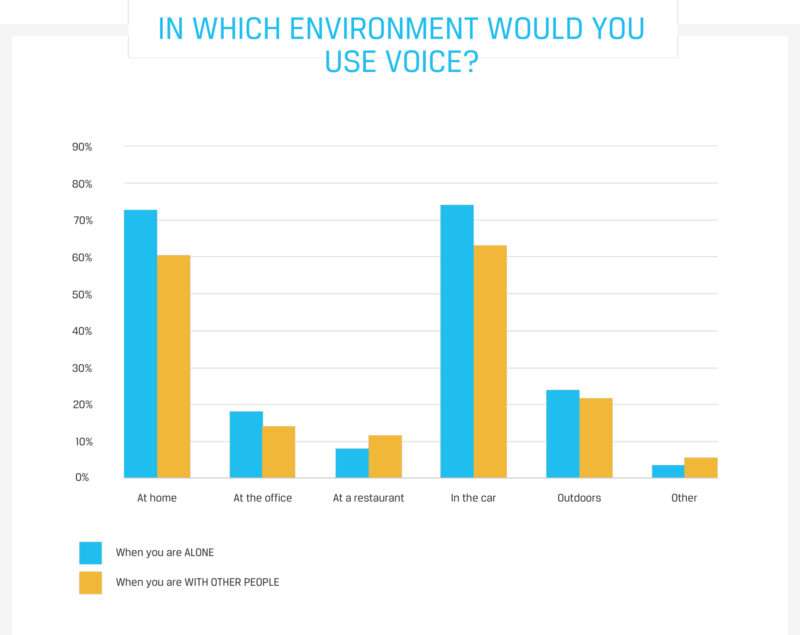

When we’re using voice software, it’s common to be out and about and not always in a quiet place. Loud surroundings and noise still pose an issue for voice interfaces.

Things like speech restoration and noise reduction still have a way to go.

Photo Credit: punchcut.com

4.3 Linguistic Challenges

Natural language processing has come a long way, but you have to remember that language is a very complex thing.

People pronounce words in different ways. They have accents. In short, there are a lot of nuances to language that voice algorithms still haven’t quite mastered.

Design is key in the voice app development process. Users should be given the information they need that will help them accomplish various tasks.

The last thing you want is for your target audience to feel frustrated or overwhelmed when using your voice software.

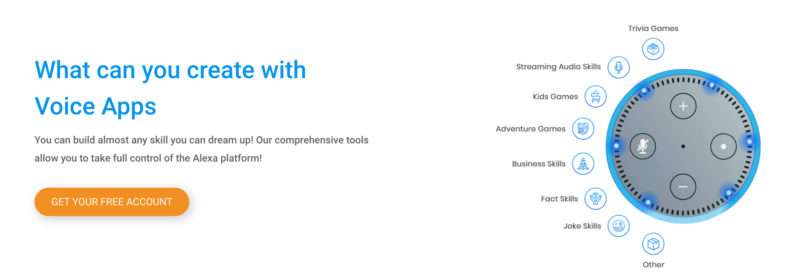

Creating a voice application isn’t anywhere near the same as creating a regular mobile app. In our Simple Starter package, we can help sort through your ideas to focus on a core set of features and lay the groundwork for future app development.

What’s your favorite voice assistant app, and how does it do a better job than the competition?